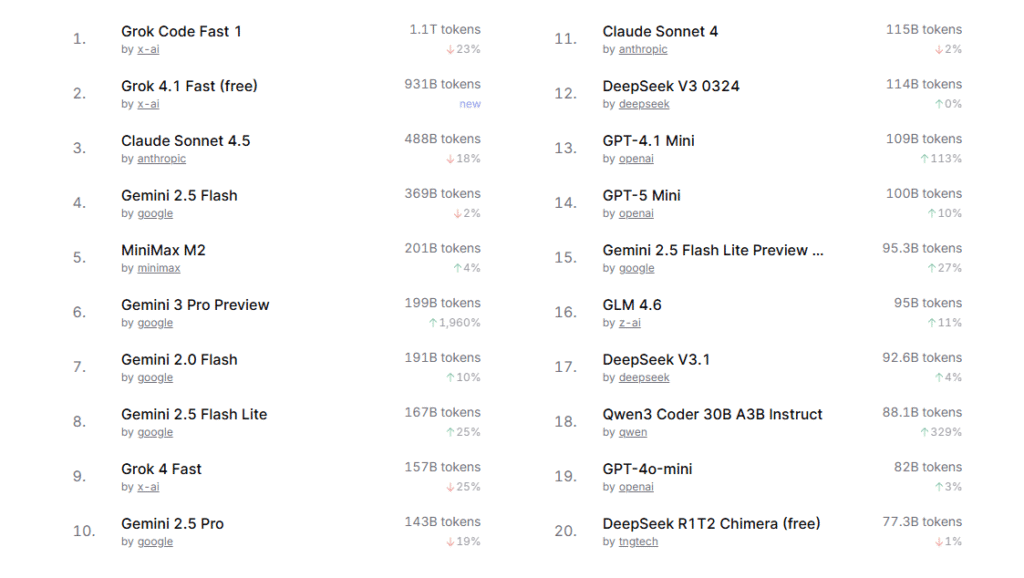

In a significant development for the AI developer community, Grok 4.1 Fast has been crowned the leader in Python benchmarks for programming and is edging away from xAI’s coding-specific benchmark, Grok Code Fast 1, and is firmly second. This is among the most noteworthy leaderboard reshuffles in recent times and demonstrates the increasing competition among the most powerful coding models.

Community leaderboards, industry assessments, and independent tests for developers began appearing soon after the release of Grok 4.1, consistently showing the Fast variant outperforming the competition across a variety of realistic Python workflows. While code-specific models have traditionally dominated these tests, Grok 4.1 Fast’s architecture seems to have tilted the balance in its favor.

Designed for high-speed Reasoning and Workflows with Agents

Grok 4.1 Fast is designed to be a high-throughput, low-latency model that is capable of handling large context windows. It supports context lengths up to two million tokens and allows developers to load massive log files, large codebases, and multi-file refactoring tasks in a single session. This is a significant improvement in engineering applications, where context fragmentation can reduce precision.

In addition, the model’s enhanced tool-calling capabilities, seamless integration with web search, code execution, and multi-step workflow orchestration, play a key role in its performance. These capabilities let it operate more like an autonomous coding assistant than the traditional prompt-in-and-text-out model.

This agentic behavior, backed by the speed of its responses, is believed to be among the key advantages that made Grok 4.1 Outperform even the most sophisticated codes across various benchmark categories.

Grok Code Fast 4.1 remains highly Competitive

Even though it has lost the top spot, Grok Code Fast 1 still delivers solid performance and maintains a decisive advantage over other programming models, especially in fast-paced, cost-effective workflows. Designed explicitly for programming tasks, it continues to provide reliable results in situations that require frequent, lightweight generation, such as code suggestions, pattern corrections, and fast prototyping.

Its cost-effective pricing and execution speed make it a viable option for large-scale deployments that require thousands of code tasks to be executed per hour. Many engineering departments still consider Grok Code Fast 1 the ideal combination of cost and capabilities.

Benchmark Results vary, but the general Pattern is Apparent

Although benchmarks for coding differ in terms of methodology, in the range of HumanEval or MBPP to real-world GitHub-issue simulations, the emerging trend has Grok 4.1 Fast consistently close to or even at the top in several categories:

- High context understanding that allows sophisticated cross-file reasoning

- Excellent tool-use fluency, especially in multi-step Python debugging

- Effective error correction regardless of the noisy and poorly written environments of code

- The ability to make a general sense, which is often associated with better coding accuracy

But experts warn that no single model is the best for each test type. Some experts in the field have smaller gaps between the two Grok models or choose to use other systems that are precision-optimized for coding in precision-based comparisons.

What does this mean for Developers?

The most recent results indicate a change in how AI coders are evaluated. Instead of focusing on function-completion accuracy, developers now consider the entire process of productivity and include:

- Speed of execution

- Context capacity

- Tool integration

- Reliability throughout long sessions

- Cost per request

Grok 4.1 Fast’s impressive performance suggests that models designed for reasoning with agents, not for text generation, will be more widely adopted in engineering and business environments.

Teams that choose from the two Grok versions will make their choice based on the workload requirements:

- Select Grok 4.1 Quick for more complex, multi-file Python tasks as well as deep debugging and automated workflows.

- Select Grok Code Fast for high-volume, cost-effective code generation in which speed and scalability are more important than reasoning with more depth.

Outlook: A rapidly changing Code-model Landscape

The development of Grok 4.1 Fast underscores a new direction for AI development, where generalist models armed with sophisticated tool-calling capabilities and massive context windows are beginning to outperform special coding models. As the larger LLM industry continues to release multimodal, tool-integrated, and reasoning-oriented platforms, the competition for benchmarks based on coding is set to rise further.

Adoption by developers and further independent tests in the upcoming weeks will provide more clarity on the extent to which Grok 4.1 Fast’s performance is stable across more extensive real-world scenarios. At present, this model’s performance marks an essential milestone in the race to create the most efficient AI coder.

FAQs

1. Is Grok 4.1 Fast now the best model for Python programming?

It ranks first in many vital benchmarks and community-run benchmarks, including those that emphasize reasoning and long-term context, as well as workflows driven by tools. However, the results differ depending on the benchmark type and the task.

2. What is the Grok Code Fast 1 stack up?

It’s a quick, cost-effective coding method that is highly effective for the most demanding programming tasks. In the majority of production tasks, it is the most cost-effective option.

3. Do teams need to switch their teams immediately in Grok 4.1 Speed?

But not without evaluating. Engineering teams must test each model against their actual tasks, such as debugging, reviewing code, refactoring, or unit test generation, to determine the appropriateness and effectiveness, as well as cost.

4. Does the wider context window really matter?

Yes. The ability to import a complete repository or multi-file structures into a single session is among Grok 4.1 Fast’s primary benefits for Python engineering tasks.

5. Are these benchmarks generally recognized?

The benchmarks for each vary, and some are focused on narrow code tasks. The majority of them favor Grok 4.1 Fast; however, no single model can be used for every possible test.

Also Read –

Grok 4.1 Fast: Full Breakdown of xAI’s New High-Speed Agent Model